RESEARCH:

THIS DREAM weaves REALITY

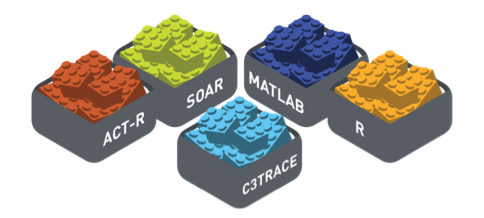

In training exercises and simulations, computer systems “predict” how users might respond in a scenario, which allows the computer to respond to the trainee’s actions during the simulation. However, different systems rely on different human performance models to make their predictions. DREAMIT is focused on creating a standard across these models. Think of it as building a common set of blueprints for how human performance models work with training simulation software, with the goal of improving the realism and accuracy of training.

A WORKSPACE FOR MODEL INTERACTION

Project Details

Proposal Title: DREAMIT: Design, Reconfigure, Evaluate Autonomous Models in Training

Agency: United States Air Force

Contract Number: FA8650-15-M-6662

Start Date: 2015

The proliferation of autonomous and human-machine systems drives the need for new tools to support system design and evaluation. Key among these are simulation testbeds enabling interactions between multiple warfighters and military systems. Equally important are methods for creating and integrating intelligent agent models into those simulation environments. That’s where the DREAMIT solution comes in.

How We Did It

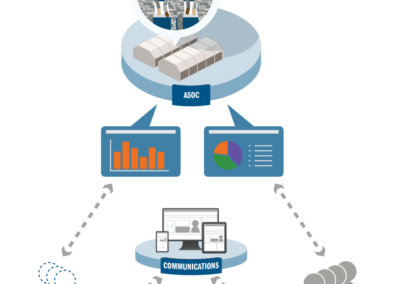

This solution is designed to advance the state of the art in human-machine systems in three areas: the efficiency of developing intelligent agent models, the integration of agent representations into simulation environments, and the speed of evaluating human-machine systems using simulation environments. DREAMIT centers on two concepts. The first is to combine multiple intelligent agent models, specified at different levels of abstraction, within the simulation environment. By using different levels of abstraction, agent models can produce behavior at the desired level of fidelity without incurring undue computational complexity and cost. The second concept is to create a virtual data loop. The aim is to capture data from training simulations, apply computational methods to the data to facilitate agent development, and close the loop by gathering additional data after re-embedding the agent models in the simulation environment.
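The data loop described above can be illustrated with a small sketch. This is not DREAMIT's implementation: the names `FrequencyAgent`, `run_simulation`, and `data_loop` are hypothetical, the "simulation" is a stand-in that simply replays a list of situations, and the agent is a deliberately simple frequency model chosen only to show the capture, fit, and re-embed cycle.

```python
from collections import Counter, defaultdict


class FrequencyAgent:
    """Hypothetical low-fidelity agent: predicts the action seen most often per situation."""

    def __init__(self):
        self.counts = defaultdict(Counter)

    def fit(self, log):
        # Update the model from captured (situation, action) pairs.
        for situation, action in log:
            self.counts[situation][action] += 1

    def act(self, situation, default="hold"):
        # Fall back to a default action for situations never observed.
        seen = self.counts.get(situation)
        return seen.most_common(1)[0][0] if seen else default


def run_simulation(agent, scenario, trainee_policy):
    """Stand-in for a training exercise (assumption: a scenario is a list of situations).

    Returns the captured trainee log and the agent's prediction at each step.
    """
    log, predictions = [], []
    for situation in scenario:
        predictions.append(agent.act(situation))
        log.append((situation, trainee_policy(situation)))
    return log, predictions


def data_loop(scenario, trainee_policy, rounds=2):
    """Capture data, fit the agent, re-embed it, and gather data again."""
    agent = FrequencyAgent()
    accuracy = []
    for _ in range(rounds):
        log, predictions = run_simulation(agent, scenario, trainee_policy)
        hits = sum(p == action for p, (_, action) in zip(predictions, log))
        accuracy.append(hits / len(log))
        agent.fit(log)  # close the loop: the next round uses the updated agent
    return agent, accuracy


if __name__ == "__main__":
    scenario = ["contact", "clear", "contact"]
    policy = lambda s: "engage" if s == "contact" else "advance"
    _, accuracy = data_loop(scenario, policy)
    print(accuracy)  # prediction accuracy per round
```

In this toy setting the agent knows nothing in the first round and matches the trainee in the second, which is the point of the loop: each pass through the simulation yields data that improves the agent used in the next pass. A higher-fidelity agent could be substituted behind the same `fit`/`act` interface, which is one way to read the idea of mixing models at different levels of abstraction.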

The major benefits of DREAMIT are: (1) reconfigurability of the exercise scenario and of the live and simulated players; (2) adaptability of intelligent agent models based on training history and encountered tactics; (3) anticipation of Red and Blue Force responses to new tactics based on intelligent agent models; and (4) efficient and economical evaluation of alternate system designs. These features make DREAMIT applicable in other domains, military and commercial alike, where flexible scenario construction and adversary prediction are needed for training, such as emergency response and security response coordination.